/dev/null

... cause mpenkov.blogspot.com is taken

Wednesday, September 21, 2016

Выборы, выборы...

Не хочу претендовать на какие-то лавры в математике или статистике, это не моя тема. Но собирать данные из Интернета я умею, и делиться ими в открытом доступе - тоже. Так что если что не так, смотрите исходники, правьте баги и пишите мне. Итак, начнём.

Для начала — вот данные о выборах с сайта Центризбиркома. Не очень доступно, но желающий там всё найдёт. Я выполнил всю нудную работу за Вас и вытащил эти данные в JSON. Что-то не так? Смотрите исходники, правьте баги, пишите мне. Я всё поправлю.

В руках у нас данные, что дальше? Давайте нарисуем те кривые, которые нарисовал слон.ру, "математически доказав", что выборы фальсифицированы. На горизонтальной оси — процент, набранный каждой из партий. На вертикальной — сумма голосов с участков с указанным процентом. Что мы наблюдаем? Не совсем получилось воспроизвести те кривые, что у слон.ру. Они немного похожи — ЕР лидирует с явным отрывом от остальных трёх партий. Только максимума ЕР достигает лишь на отметке 15%, а не выше. Дальше — строго по убывающей, никакой второй горки, как у слон.ру, не наблюдается. Кривая ЕР заметно отличается от кривых других партий — у распределения заметно длиннее правый хвост. При этом стоит заметить, что ни одна из кривых не похожа на естественное распределение (гауссиану). Я до сих пор не могу понять, почему слон.ру утверждает, что распределение должно быть естественным. Не мешало бы это объяснить, вместо того, чтобы констатировать это явление как научный факт и явный показатель фальсификаций.

Ладно, с первым набором кривых у меня не получилось. Может быть, это я накосячил. Помните исходники? Смотрите, правьте баги, я всё исправлю.

Теперь давайте второй набор кривых. Ось икс — явка на избирательном участке. Ось игрик — количество голосов с участка с данной явкой. Рисуем кривые — похоже, но не совсем. Безусловно, ЕР лидирует (а как же иначе). Первый горб где-то на 37% явки — тут всё совпадает. Второй горб тоже есть, но он не настолько значителен. Только у слон.ру это даже не горб, а горка, без убывающей. Не знаю, чем объяснить эти разногласия. Предполагаю, что слон.ру брал исходные данные у Центризбиркома, как и я — а где же ещё? Данные и метод их выкачивания я публикую в открытом виде, если я что-то прописал не так, то знающие люди увидят и поправят. То же самое и с рисованием кривых. У слона.ру не видно ни того, ни другого.

Не мешало бы выложить исходные данные, с которых рисовались кривые. И исходники тоже. Делать какие-то выводы о выборах вне моей компетенции. Но давайте делиться исходными данными, так всем будет лучше.

Tuesday, November 5, 2013

The blog has moved!

I've moved the blog to a new server. It now lives on my very own personal domain. The old blog will still be available, but I won't be writing there anymore.

For those that are interested, the new blog is hosted on GitHub Pages. The posts are written in simple markdown and stored in a git repository. Each time the repository is pushed, GitHub uses Jekyll to generate a static website from the posts. All this makes posts easy to write, edit and publish, and keeps everything under version control. You can see the source code for this blog here.

The migration from BlogSpot was relatively painless. Jekyll has migration recipes for a variety of existing providers. There are several alternatives for BlogSpot - this one worked for me. It keeps the posts as HTML, as opposed to markdown. This isn't really a problem for Jekyll, since it handles HTML without any problems; it's just a pain to edit HTML. Thanks, @ngauthier! Getting the LaTeX equations to display correctly was also relatively straightforward.

I also migrated my Russian-language blog to misha.penkov.id.au. Unfortunately, there was no LiveJournal importer for Jekyll, so I wrote my own.

Monday, September 16, 2013

Automatic Speech Recognition with Google

(Yes, this video is already 2 years old!) This component utilizes a Web service to do the actual speech recognition. The Web service isn't available officially, but can be accessed without Chrome to perform some quick speech recognition. Here's a script that does the work for you:

The script is mostly based on existing work by Sunil. I had to modify a few things to get it working:

- Fix the fancy quotation marks

- Fix the wget line (it wasn't outputting to the correct file)

Monday, April 22, 2013

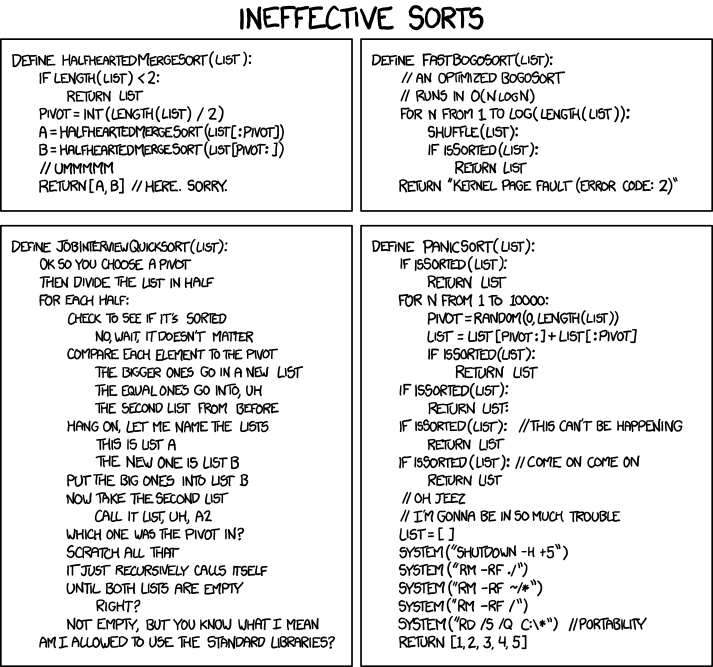

Coding Practice: Quicksort

Intuitive Description

"Just grab a thing and compare the other things with it."The trick is that Quicksort "grabs" and "compares" intelligently, avoiding unnecessary comparisons and allowing it to sort a collection in logarithmic time. Specifically, this is achieved by partitioning the collection around the thing we just grabbed (called the "pivot") into two smaller collections. Everything smaller than or equal to the pivot goes into the left sub-collection, and everything else goes into the right sub-collection. The two sub-collections can then be Quicksort-ed independently, recursively. The recursion terminates when the sub-collections contain less than two elements.

Code and Analysis of Computational Complexity

The code for Quicksort is fairly straightforward:

Most of the work is performed in the partition method, which can be implemented in-place.

The computational complexity of Quicksort depends on the selection of the pivot element. In the best case, the selected pivot is the median of the collection and the partition step divides the collection into two smaller collections of identical size. Since the size of the sorted collection is halved at each step of the recursion, the best case complexity of Quicksort is $O(N \log N)$. In the worst case, the selected pivot is the minimum or maximum of the collection, and the partition step achieves very little. The worst case complexity is $O(N^2)$.

There are several ways to select the pivot element, the simplest being selecting the first, last or middle element of the collection. Since selecting the first or last element can lead to worst-case performance if the array is already sorted, selecting the middle element is the better option of the three.

The effect of pivot selection on the complexity of Quicksort can be observed empirically, by counting the number of comparisons for three different types of input: random, sorted and uniform; and for three different pivot selection methods: first, last and middle. Here are some results (sorting 100 input elements, showing the number of comparisons first, last, middle selection modes, respectively):

- random (mean over 100 runs): 713.28, 715.17, 713.25

- sorted: 1001, 1001, 543

- same: 1001, 1001, 1001

Comparison with Mergesort

- First, Quicksort does all of its work in the divide (partition) step. The conquer step is trivial, since after recursion is complete, the array is completely sorted. In contrast, Mergesort does very little work in the divide step, and does most of its work after the recursion is complete.

- Second, the algorithms have different computational complexity: Mergesort is consistent $O(N \log N)$, Quicksort is $O(N \log N)$, $O(N \log N)$ and $O(N^2)$ in the best, average and worst-case, respectively.

- Third, the algorithms have different space complexity: unlike Mergesort, Quicksort's partition step can be implemented in-place without significant impact on complexity.

- Fourth, unlike Mergesort, Quicksort is not a stable sorting algorithm, since the partition step reorders elements. Stable implementations of Quicksort do exist, but are not in-place.

- Finally, Mergesort is easier to parallelize than Quicksort, since the divide step is simpler with the former.

Conclusion

Monday, April 8, 2013

An (unexpected) defense of Microsoft Store...

While they failed to obtain my business for the University 365 offer (a blunder that they will surely regret for decades), a quick read of the MS Office license revealed that, as a proud owner of the Home and Student version, I can install it on one more PC. Which is what I promptly did (can't beat free!).

Unfortunately, the smooth sailing ended here. In blind defiance of the above-mentioned license, the installed program refused to authenticate, and threatened to disable itself within a month if I did not provide it with a new license key, which, as we all know, costs bags of money. Having read the The License, I was fully confident in my self-righteousness. There was no way I was going to pay for something that was already mine. I did the unthinkable. I picked up my phone and called the Verification Hotline.

The Verification Hotline is the last resort for people that want to authenticate a Microsoft product, but for one reason or another can't do so over the Internet. It was well after 7pm when I called, so I half-expected to be kindly asked to call back the next day. Fortunately, these expectations were misplaced, and I was treated to a warming chat with... a computer. To proceed with verification, you need to enter something like 64 digits (through the keypad!) to identify your install. It's difficult to convey the rush of adrenaline as you power towards the last couple of digits. I've never diffused a bomb, or issued a launch code for an ICBM, but I guess those experiences would come pretty close.

After all that, I got through to an operator. Finally, a chance to plead my case... in Japanese. Great. After a long and thorough discussion about when and how I installed The Product, the operator agreed to activate my installation. To do that, I had to enter another missile launch code into my Office install, as she was reading it out. Another 64 digits or so, and my efforts would finally bear fruit.

My call got cut off after 10 digits. Game over, man!

Unable to control the fury, I redialed the number, and mashed the keypad until an option to talk to an operator was presented. I had naively expected that somehow, the person I was talking to before would be there, and we could pick up where we left off... Alas, that was not to be. The voice on the other side of the phone was cold and distant. "I'm afraid you'll have to start again...", she said apologetically.

Like I mentioned earlier, I'm a fairly persistent guy when I need to be. I persevered. Entering the 64-digit launch code a second time through was nowhere as painful as the first. I had the thought that by the time I'd have gone through the process another 3 or 4 times, I'd have the whole thing memorized. It's really no big deal -- back in the good old days of Windows 95, I reinstalled the O/S so often I had the whole product key committed to memory.

While what I've written so far doesn't really do much in defense of the Microsoft Store, there really is a happy ending to all this. After I entered my launch code a second time, I didn't have to jump through any more hoops. The kind soul I spoke to the first time through pre-recorded the authorization code for me, and all I had to do was punch that into my Office install. All done! And it only took half an hour...

Furthermore, I had to recently stumble into The Store on an unrelated issue. I was surprised to see something that I don't recall seeing before -- a live chat option. You click on that, and get to talk to a real person. Straightaway. It's brilliant! If only that was there a week ago -- I wouldn't have had to rant. Oh well. Better out than in, they say.

Thursday, April 4, 2013

The Decimator, 2.0

http://mpenkov.github.com/decimator/

It accepts free text input, and handles both traditional (Kanji only) and mixed (Arabic numerals plus Kanji) numbers. Feel free to give it a whirl.

Thursday, March 28, 2013

Surviving Customer Support

I'm trying to get my hands on an install of MS Office 2013 (well, I really only need PowerPoint to do my presentations, and Word to occasionally read files sent by people who don't know better). Since I'm an honest individual, I decide to do the unthinkable and actually pay for Microsoft software. However, I'm also a student, so I'm looking for a way to pay the least amount possible and still keep a clear conscience. It appears the best option is the University 365 deal that's exclusive to full-time students. The catch: they have to verify you're a student before you can purchase or even download anything. This is where the fun begins.

You get three options for verification: through your school, an ISIC card, or manually typing a verification code into a text box. The first option sounds like the best, except it doesn't work: you get redirected to your school (in my case, Hokkaido University), you enter a username and password, and... get redirected to the start page. One option down, two to go. No, scratch that, I don't have an ISIC card. Well, that pretty much leaves one option -- get in touch with support and ask for a verification code. Sounds easy, huh?

Actually, it isn't. Go to the MS store and search for a way to contact their support team. Bonus question: try to find a way that doesn't involve picking up a telephone. Go on, I'll wait.

Back already? Did you find an email? Let me know if you did, cause I didn't. As a consolation prize, I found a chat option... that I can't use right now because it's outside of US business hours. Seriously? Is it really that hard to foresee that not everyone is going to be in the same time zone as the US? It's almost as if Microsoft haven't heard of this wonderful thing called "eemail", which allows people across the globe to communicate without having to arrange a mutually convenient time.

Alright, let's try a different angle: let's go through the local Japanese MS site. Maybe they have a workable support option. Here's their product lineup. I'll save you the time of having to click through to it: